Pixeltrack: a fast adaptive algorithm for tracking non-rigid objects


Abstract

In this paper, we present a novel algorithm for fast tracking of generic objects in videos. The algorithm uses two components: a detector that makes use of the generalised Hough transform with pixel-based descriptors, and a probabilistic segmentation method based on global models for foreground and background. These components are used for tracking in a combined way, and they adapt each other in a co-training manner. Through effective model adaptation and segmentation, the algorithm is able to track objects that undergo rigid and non-rigid deformations and considerable shape and appearance variations.

The proposed tracking method has been thoroughly evaluated on challenging standard videos, and outperforms state-of-the-art tracking methods designed for the same task. Finally, the proposed models allow for an extremely efficient implementation, and thus tracking is very fast.
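
To make the detection component more concrete, the following is a minimal sketch of pixel-based generalised Hough voting, in which each pixel votes for possible object centres according to a quantised descriptor. It is an illustrative simplification and not the authors' implementation: the names `Offset`, `HoughModel`, `Detection` and `detectCentre` are hypothetical, the descriptor quantisation is assumed to be computed elsewhere, and the segmentation and co-training steps are omitted.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Displacement from a pixel to the object centre, in pixels.
struct Offset { int dx; int dy; };

// Hypothetical pixel-based Hough model: for each quantised descriptor
// index, the centre offsets observed for pixels with that descriptor.
struct HoughModel {
    std::vector<std::vector<Offset>> offsets;  // indexed by descriptor

    explicit HoughModel(std::size_t numDescriptors) : offsets(numDescriptors) {}

    // Learn from one object pixel at (x, y), given the known centre (cx, cy).
    void learn(int descriptor, int x, int y, int cx, int cy) {
        offsets[static_cast<std::size_t>(descriptor)].push_back({cx - x, cy - y});
    }
};

struct Detection { int x; int y; int votes; };

// Every pixel casts one vote per offset stored under its descriptor.
// The position with the most votes is returned as the detected centre.
// `descriptors` holds one descriptor index per pixel in row-major order.
Detection detectCentre(const HoughModel& model,
                       const std::vector<int>& descriptors,
                       int width, int height) {
    assert(descriptors.size() ==
           static_cast<std::size_t>(width) * static_cast<std::size_t>(height));
    std::vector<int> acc(descriptors.size(), 0);  // voting accumulator

    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            const std::size_t d =
                static_cast<std::size_t>(descriptors[static_cast<std::size_t>(y * width + x)]);
            for (const Offset& o : model.offsets[d]) {
                const int vx = x + o.dx;
                const int vy = y + o.dy;
                // Votes that fall outside the image are discarded.
                if (vx >= 0 && vx < width && vy >= 0 && vy < height) {
                    ++acc[static_cast<std::size_t>(vy * width + vx)];
                }
            }
        }
    }

    Detection best{0, 0, 0};
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            const int v = acc[static_cast<std::size_t>(y * width + x)];
            if (v > best.votes) best = {x, y, v};
        }
    }
    return best;
}
```

In this sketch, a model would be trained by calling `learn` for the pixels inside the initial bounding box, and `detectCentre` would then be called on the descriptor map of each new frame. Because each pixel votes independently, the accumulator maximum can remain stable when the object deforms, which is the property the abstract relies on for non-rigid objects.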

Authors

Stefan Duffner, LIRIS, INSA de Lyon, France

Christophe Garcia, LIRIS, INSA de Lyon, France

Paper

S. Duffner and C. Garcia, Pixeltrack: a fast adaptive algorithm for tracking non-rigid objects, In Proceedings of ICCV, 2013 (djvu, bibtex)

Datasets

We used two evaluation datasets:

- Babenko sequences: the videos that Boris Babenko et al. used to evaluate their MILTrack algorithm (IEEE TPAMI 2011, CVPR 2009).

- Godec sequences: the videos that Martin Godec et al. used for tracking non-rigid objects.

Annotation

The bounding box annotation in XML format can be downloaded from here.

Code

C++ code using the OpenCV (2.4) library (tested under Linux): pixeltrack_v0.3.tgz.

This code is published under the GPLv3 license. Use this code for research purposes only. If you use it, cite the paper mentioned above.

Results

Here are some videos showing qualitative results comparing our method to HoughTrack (Godec et al. ICCV 2011) and TLD (Kalal et al. CVPR 2010). For quantitative results, please see the paper.

- Tiger2 (3.6Mb)

- Gymnastics (13Mb)

- Skiing (2.0Mb)

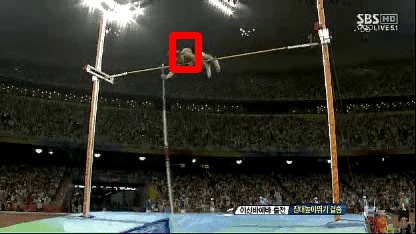

- High Jump (4.3Mb)

- Diving (9.1Mb)

- Motocross1 (6.3Mb)

- Our method uses a bounding box for initialisation, but the size and aspect ratio of the box are not updated during the tracking. Thus, in these videos it makes more sense to just display the current centre position (red cross).

- For HoughTrack, we used the initialisation provided by the authors.

- For TLD, we used the same initialisation as for HoughTrack. (We also tried the same initialisation as for PixelTrack, but it performed worse.)

- TLD loses track very early in some of these examples with rotating and deforming objects.

- In motocross1.avi, our method has some difficulty adapting to the large appearance changes and rotation (HoughTrack provides better results here).